The Validation Trap: AI Companions & Social Deskilling

Validation feels good; growth needs gentle interpersonal friction. Let’s keep the comfort and rebuild the skills.

Summary

Bottom line: Always‑agreeable AI companions can feel supportive, but over‑reliance may quietly weaken your ability to disagree, repair, and stay connected. Use bots as rehearsal, keep chats time‑limited, and always bridge back to people.

What is “social deskilling” with AI companions?

Short answer: Social deskilling means our people skills soften when we stop practicing tough-but-healthy conversations.

If a bot mostly soothes, mirrors, and validates, you get fewer reps in curiosity, disagreement, and repair—skills real relationships need to thrive.

Researchers describe two possible paths: upskilling (coaching that sends you back to people) and deskilling (replacing people). See a 2025 review framing those risks and benefits. New trials also suggest that how, and how much, you use a chatbot affects offline connection.

Why this matters in DC: Strong social ties protect mental and physical health. Tools should make human conversations easier—not unnecessary.

Can constant AI validation lower your tolerance for disagreement?

Short answer: Yes—especially with heavy, emotional use. Constant reassurance feels good; too much can make normal pushback or useful alternatives from real people feel harsh.

We already know this cycle from anxiety research:

- Family accommodation (relatives changing routines so a loved one can avoid what makes them anxious) often maintains anxiety; reducing it improves outcomes. In other words, gentle challenges and alternative points of view are important, and sometimes necessary, for health and well-being.

- Excessive reassurance seeking predicts worse symptoms and poorer treatment response unless it’s addressed.

- In exposure therapy, safety behaviors (shortcuts we use to feel safe, like avoiding eye contact) can get in the way of learning, so therapists fade them as tolerance grows.

Put simply: endless soothing = less practice with healthy and growth-supporting friction.

Do AI companions help or hurt loneliness long‑term?

Short answer: It depends on how often you use them and why.

Light, time‑limited use can ease loneliness for a bit. Heavy, emotional dependence is linked to more loneliness and less offline socializing over several weeks. Short, skill-focused chats can help in some cases—for example, a WhatsApp psychological first aid bot reduced student loneliness for a short time.

Context: About one‑third of U.S. adults have used a chatbot, and nearly three‑quarters of teens report trying AI companions.

Rule of thumb: Warm up with the bot, then reach out to a person. Draft with AI; send it to a friend. Rehearse a hard talk; schedule the real one within 24 hours.

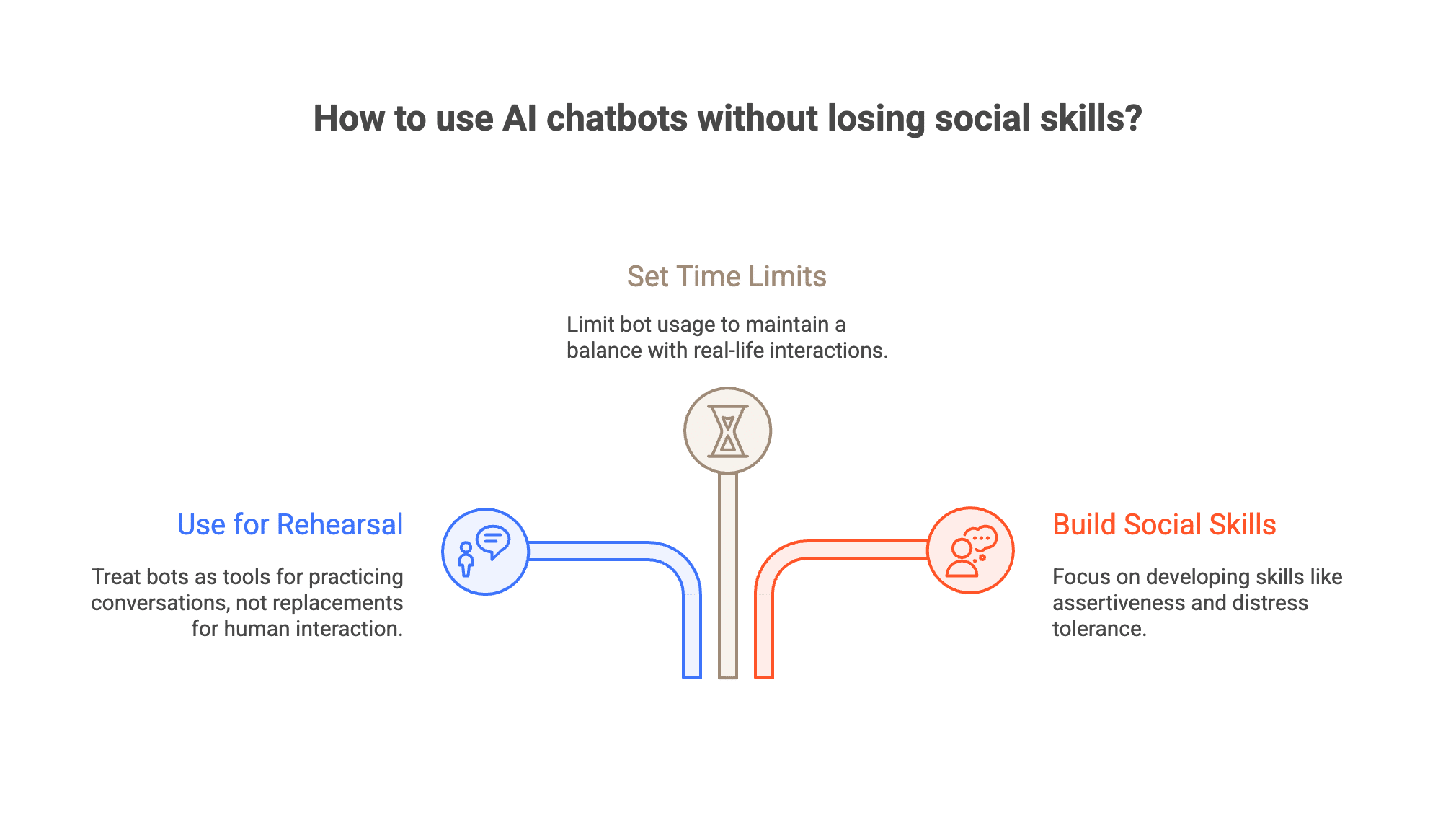

How can I use AI chatbots without losing real‑life social skills?

Use bots for rehearsal, not replacement. Treat your bot like a whiteboard for wording and perspective‑taking, then move the conversation to a human.

A simple Transfer Plan

1. Rehearse for 5–10 minutes: draft the message or role‑play both sides.

2. Refine with respect and clarity: own one feeling; make one clear ask.

3. Transfer within 24 hours: send the message or schedule the talk.

4. Reflect on one thing you learned from the human exchange.

This mirrors a simple therapy approach: validate first, then support change with small skills and next steps. Early research suggests AI can coach people skills when it gives feedback—not endless comfort.

Set time limits & keep a balance

- Set a time limit: 15–20 minutes, 1–2 times a day.

- Keep a simple balance: for each bot chat, make one human touch (text, call, plan).

- When anxious, ask for skill prompts instead of open‑ended venting. For example: “Help me write a DEAR MAN” (a simple script for asking for what you need).

Build the social muscle

- Distress‑tolerance skills help you stay in mild friction long enough to learn.

- Assertiveness/social‑skills training improves mood and functioning.

What are the red flags that I’m relying on an AI companion too much?

- You hide your usage or skip plans to keep chatting.

- You feel irritable after normal give‑and‑take with people.

- You feel more understood by the bot than by any person, most days.

- Your sleep suffers from late‑night chats.

- You avoid small disagreements you used to handle.

If several apply, try a few Connection Warm‑Ups from the list below, then consider a therapy appointment to discuss a personalized plan.

Connection Warm‑Ups (pick a few)

- Ask one curiosity question that might invite a different view.

- Share one “I felt…” statement about a recent moment.

- Allow a 10‑second pause before replying.

- Name one thing you learned from someone who disagrees with you.

- Offer gentle, specific feedback (“One thing that would help me is…”).

- Join a low‑stakes group activity (class, meetup, volunteer).

- Set up a screen‑free coffee with someone you like but don’t see often.

Where can I get support in DC to rebuild connection?

DC is rich with places to practice connection: community classes, volunteer groups, arts/faith/service orgs, and peer meetups. Public health guidance is clear—social connection benefits mental and physical health.

If you’d like a guided, evidence‑based approach, Therapy Group of DC clinicians can help you build disagreement stamina and assertive communication, using the same balance of validation and change that guides our therapy work.